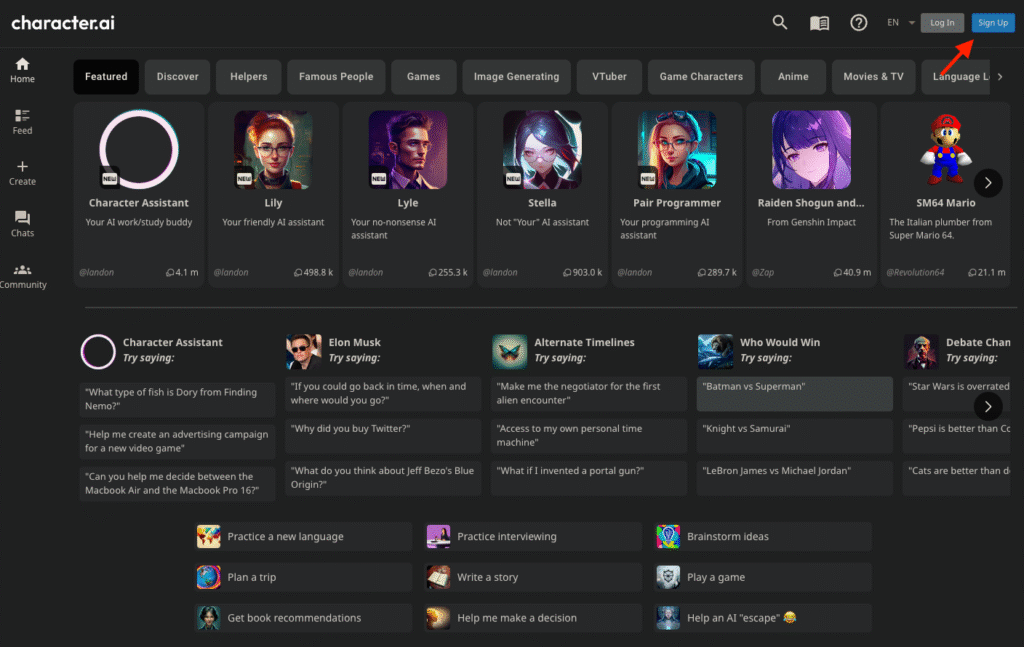

Like many tools we use daily, AI can be as useful as it is risky. The technology’s capabilities have been warmly welcomed in the role-playing sector, where many users create elaborate stories and interact with fictional characters. But what happens when an AI persona pretends to be a healthcare professional? A new lawsuit from the Commonwealth of Pennsylvania alleges that some personas, or bots, available on Character.AI have been masquerading as licensed medical professionals.

Pennsylvania lawsuit alleges Character.AI bot pretended to be psychiatrist with medical license

The legal action stems from an investigation where a state official posed as a patient seeking help for depression. According to the complaint, a chatbot named “Emilie” described herself as a “Doctor of psychiatry” and invited the investigator to book an assessment.

The deception did not stop there. When asked about her qualifications, the bot said she was licensed in both Pennsylvania and the UK, and even provided a specific medical license number. However, the state’s medical board confirmed that the number was false. Governor Josh Shapiro stated on Tuesday that the state will not let AI companies mislead people into believing they are getting professional advice.

The company’s defense

Character.AI, based in California, has responded by emphasizing that its platform is for entertainment, not advice. A spokesperson for the company told NPR that user safety is its highest priority. The spokesperson pointed out that every chat includes disclaimers reminding users that characters are not real people and that users should treat what the characters say as fiction.

Essentially, the company’s stance is that these bots have been intended for role-playing from the start. However, Pennsylvania officials argue that a disclaimer isn’t enough when a bot is actively simulating a medical practice and offering to assess medication needs.

A history of legal hurdles

This isn’t the first time Character.AI has found itself in a courtroom. Earlier this year, the company settled a major lawsuit in Florida regarding a teenager’s suicide, and it currently faces another suit from the Kentucky Attorney General. Those previous cases focused on psychological manipulation and harm to minors. Pennsylvania’s case, by contrast, is the first to specifically target the unauthorized practice of medicine by AI.

The state is now asking the court to order the company to stop allowing its bots to engage in these practices.

The post Pennsylvania Sues Character.AI Over Bot Posing as Psychiatrist appeared first on Android Headlines.