If you were to travel back in time to 1996 with a 2TB thumb drive, you’d be able to fit the entire World Wide Web on it.

Of course, that kind of storage didn’t exist in the ’90s, so it’s never been that simple for the Internet Archive. The nonprofit site, which launched three decades ago this year, went from making copies of the web on tape drives to storing more than 1 trillion pages’ worth of Internet history at data centers around the world. Using its Wayback Machine, anyone can look back to what a web page used to look like, which means you can browse through old GeoCities websites, view Google’s original Code of Conduct (back when it still said “Don’t Be Evil”), or read the EPA’s climate change indicators before the Trump administration scrubbed them.

All that’s on top of the Archive’s vast collection of other digital resources, from live concert tapings and public domain e-books to troves of forgotten DOS games. Roughly 2 million people access the site’s resources every day.

“We want it all,” says Brewster Kahle, the Internet Archive’s founder and chairman. “We want all the public works of human beings. So if we don’t have it, we want it.”

But while the Internet Archive hasn’t fundamentally changed over the years, the Internet itself is transforming in ways that jeopardize the nonprofit’s mission. Web publishers have started blocking the Wayback Machine out of fear that AI companies are scraping the material. A legal battle with book publishers ended with the Archive paying a settlement and removing more than 500,000 books from its collection. Meanwhile, the cost of storing humanity’s digital footprint keeps going up, as demand from AI data centers drives up storage and memory prices.

All of which makes Kahle wistful for how things used to be for the Internet Archive, before book publishers, tech giants, and the legal system got in the way.

“We have to still try to make a library work, even though it’s a difficult, difficult time for libraries,” he says.

The Internet Archive isn’t just a way to access old web pages, important as that may be. It’s also a repository for information and culture that anyone can access, download, and do what they please with. In a world where digital content is increasingly licensed rather than owned, that in itself seems like something worth preserving.

How it started

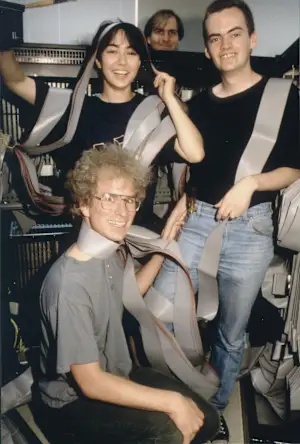

Kahle had been dreaming of something like the Internet Archive long before it became feasible. In the early 1980s, he studied AI at MIT and became a lead engineer on supercomputers at Thinking Machines. The modern Internet hadn’t been born yet, but he recalls imagining that these supercomputers would someday make reference materials readily available to anyone.

“For me, back in 1980, the idea was to try to build this thing that we’d long since promised by then, which was the Library of Congress on your desk,” he says.

The real epiphany, though, came in 1995 while Kahle was visiting the offices of AltaVista, one of the first Internet search engines. While early work on the Internet had focused on decentralized protocols, AltaVista had built something useful by providing a hub of all the Internet’s knowledge. Kahle realized the same crawling technology could help make full copies of web pages for archival purposes, which AltaVista wasn’t interested in doing.

“I thought that the key was making sure that the works of humankind would be preserved, so we went off to collect it,” he says.

Kahle kicked in some of his own money to start the Internet Archive—he’d sold an early web publishing system called WAIS to AOL for shares worth $15 million, after spinning it off from his work at Thinking Machines—and got some help from outside backers.

But the real heavy lifting came from Alexa Internet, the for-profit traffic analysis company that he founded at the same time as the Internet Archive. For every web page that Alexa crawled, it donated a copy to the Internet Archive, and Kahle made sure that arrangement endured even after Amazon acquired Alexa for $250 million in 1999. Amazon quietly contributed to the Wayback Machine for more than 20 years, until it shut down Alexa Internet in 2021. (The Alexa name, which was based on the Library of Alexandria, lives on as the name of Amazon’s virtual assistant.)

“My hat is off to Amazon,” Kahle says. “They could have figured out how to get out of that contract, but they didn’t. So it really gave the Internet Archive, when it was a very young nonprofit, a content set.”

Running the Archive

The Wayback Machine was rudimentary at first, relying on simple automations to capture the code behind each webpage, preserving what it said and looked like at that moment. Over time, it’s become increasingly sophisticated, with new crawling engines aimed at capturing the growing complexities of the modern web.

These days, the Wayback Machine takes snapshots of roughly 1 billion URLs per day. It maintains copies of more than 1 trillion web pages, and stores 100 terabytes of new data per day in the process.

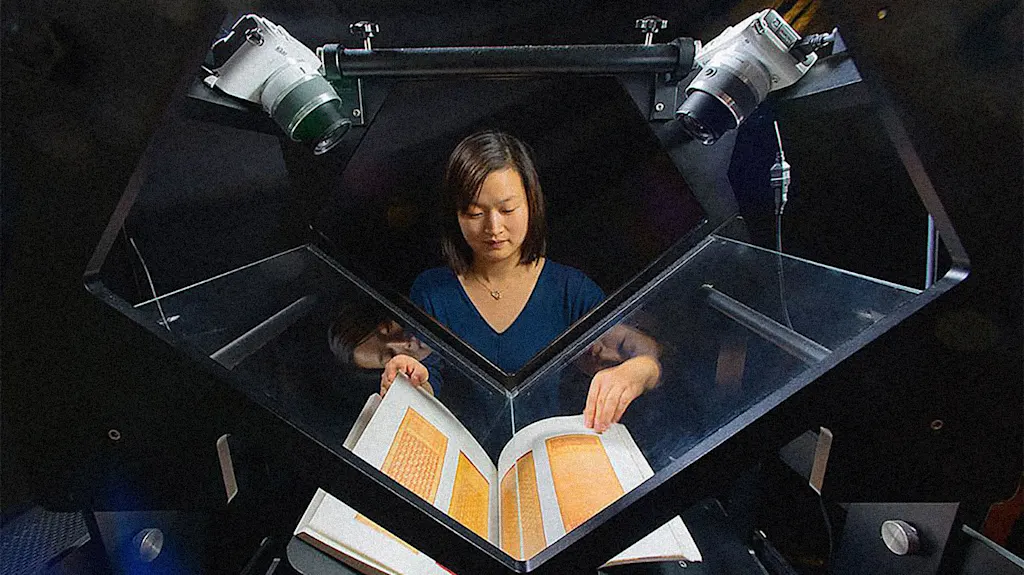

Still, Kahle says the Wayback Machine represents only about 60% of the Internet Archive’s data. The rest comes from its vast digital collections, including radio shows, podcasts, defunct mobile apps, DOS games, CD-ROM software, publicly available scientific research, scans of vintage magazines, classic TV shows, past cable news broadcasts, documents scanned from microfiche, and more. Both sides of the Internet Archive share the same computing resources.

Despite the scale at which it operates, running the Archive is a surprisingly human endeavor. While the site has tens of thousands of automated processes for archiving the web, its resources are ultimately limited, and it often needs to set priorities, says Mark Graham, the Wayback Machine’s director.

“Part of what I do every day is pay attention to this process, through conversations, through examining what we’re archiving and maybe what we’re not archiving,” Graham says.

Graham recalls a recent example in which the State Department revealed plans to delete its posts on X from before Donald Trump returned to office. He quickly spun up a project with his team and ultimately saved more than 2 million posts, hundreds of thousands of which have since vanished from their original URLs. Graham’s team has also made emergency copies of online publications whose shutdown is imminent, as he did recently with a prominent gaming site (which he declined to identify).

“We’re notified almost every day about certain web properties that are going to be shut down,” Graham says. “Often we’ll get weeks or months of advance notice, but sometimes we don’t.”

The Internet Archive doesn’t undertake all the work on its own. The group partners with more than 1,400 other groups, including libraries, universities, and museums that help decide what’s worth saving at any given time, and it operates a paid service called Archive-It for groups that want to maintain their own digital collections. Individual users can also archive pages manually through a web form or browser extension, and can even upload files for the Internet Archive’s digital collections.

“It’s a healthy mixture of different methodologies, different motivations, different agency,” Graham says.

Threats to the archive

For most of its existence, the Internet Archive hummed along without much conflict. That’s started to change over the past few years.

For the Wayback Machine, the web itself has become harder to archive. The Internet Archive doesn’t save paywalled articles, so it’s missing large swaths of content from major publishers.

“It’s gotten a lot harder to do a good job of archiving the public web, because more and more of the web is not public,” Graham says.

Some of those publishers have also started blocking the Internet Archive to prevent AI companies from scraping their content. Nieman Lab reported in January that 241 news sites explicitly block at least one of the Internet Archive’s crawling bots, most owned by the newspaper conglomerate USA Today Co. The French newspaper Le Monde has blocked the site as well, while The Guardian has filtered its articles from the main Wayback Machine interface. Reddit also began blocking the Internet Archive last year.

Graham says the Internet Archive employs a variety of tactics to turn away AI scrapers, but acknowledges that this requires “nearly constant care and feeding.”

Jack Cushman, director of the Harvard Library Innovation Lab, says publishers may be largely indifferent to the work of archivists, at least compared with the more immediate threat of AI repurposing content or putting a strain on their servers. (Cushman’s lab has developed its own archiving tool, called Perma.cc, that it offers to individuals and institutions.)

“The upshot is that the doors are slamming shut, incidentally keeping us out, when they don’t really care about us in the first place,” Cushman says.

Meanwhile, AI poses a threat in another way: demand from AI data centers is driving up the cost of storage. Kahle says the Internet Archive’s hard drive costs have already tripled or quadrupled as a result.

“We’re going to have to start becoming really clever about how to go and continue to archive,” he says.

And as the cost of storage is going up, a growing proportion of what people consume online involves video on sites like YouTube and TikTok, taking up more space than static images and text. That means the Internet Archive must become even more selective about what it saves. Its YouTube collection is only in the millions of pages, versus more than a trillion web pages overall.

“There’s other cases where there is just so much material on a given platform or service that we don’t have the capacity,” Graham says.

Outside the realm of archiving the web, the Internet Archive’s digital collections have become a source of legal trouble. Book publishers sued the group in 2020, after it started lending out digital scans of physical books as a response to the COVID-19 pandemic. That resulted in an undisclosed settlement and the removal of 500,000 books from the Internet Archive’s collection. The group also settled a separate record label lawsuit over its collection of digitized 78 rpm records, though those remain available.

Cushman says that those lawsuits have drawn attention to the well-intentioned risks that archivists take with copyrighted material. While the Internet Archive has typically avoided things that might upset copyright holders, that’s started to change in recent years.

“They’ve moved into some things—especially with the pandemic—that really did anger some people with deep pockets, and great lawyers, and so on,” he says. “It makes the edifice a bit tippier in a way that I think that no one would have wanted.”

Kahle and the Internet Archive see those lawsuits as a major detriment to the group’s mission, one that pushes content consumption further toward a model of licensing and surveillance, rather than ownership.

“The United States has just kind of descended into just lawsuits, where in the ’90s, the United States was interested in innovation, and having a game with many winners,” Kahle says.

The Internet Archive remains an indispensable resource, Cushman says, one that’s regarded among archivists as something of a benevolent monolith. There’s a playfulness in how it operates—for instance, in offering a playable collection of LCD gaming handhelds—that no one else is doing. But its challenges also make him wish there were more organizations trying to do similar things.

“It’s different from anything else that we have,” Cushman says. “So I think we look at it with a mix of gratitude, where we’re fortunate that it happened, and then apprehension because there’s only one of it.”

Looking ahead

Kahle built his life’s work around digitizing the world’s knowledge and even using AI to make it more accessible. Now that future is finally materializing, but in a way that is, ironically, concentrated around a handful of well-funded tech companies, media conglomerates, and publishing giants. When he was a young engineer, that possibility was never on his radar.

“I didn’t predict the monopolies,” he says.

Kahle still sees AI as an opportunity to sort through the Internet Archive’s vast stores of data. Researchers are already using it, for instance, to do things like interpret key talking points on Russian newscasts, and the Internet Archive has been leaning on AI to help digitize and translate more content.

But those opportunities, he says, are increasingly happening outside the United States, where there’s more legal certainty around what libraries can collect and digitize. The European Commission, for instance, is pursuing the concept of AI for the public good, promoting tools that tackle specific challenges like climate change and health care. The Internet Archive Europe, a separate group on which Kahle is a board member, has been backing an open-source tool called ClimateGPT that applies large language models to climate research.

“There could be hundreds of innovative organizations going and conquering all sorts of niches, if they had the same kinds of policies in the United States that we had in the 1990s when we let search engines happen here,” Kahle says.

Still, Kahle says he’s not discouraged, because fundamentally people want their works to be read and preserved. They also want good information that’s easily accessible, which is why the Internet Archive is being used now more than ever.

And while the Internet Archive was born from the idea of centralizing the world’s knowledge, lately it’s been sponsoring conferences on ways to decentralize the web again. It’s early days, but Kahle is hopeful that this will lead to new business models that recapture what seemed possible 30 years ago.

“Let’s build systems that support communities,” Kahle says. “Let’s make tools for participation. Let’s build democracy’s library out of all the works that can and should be shared, so we’re all building on a common commons of information.”